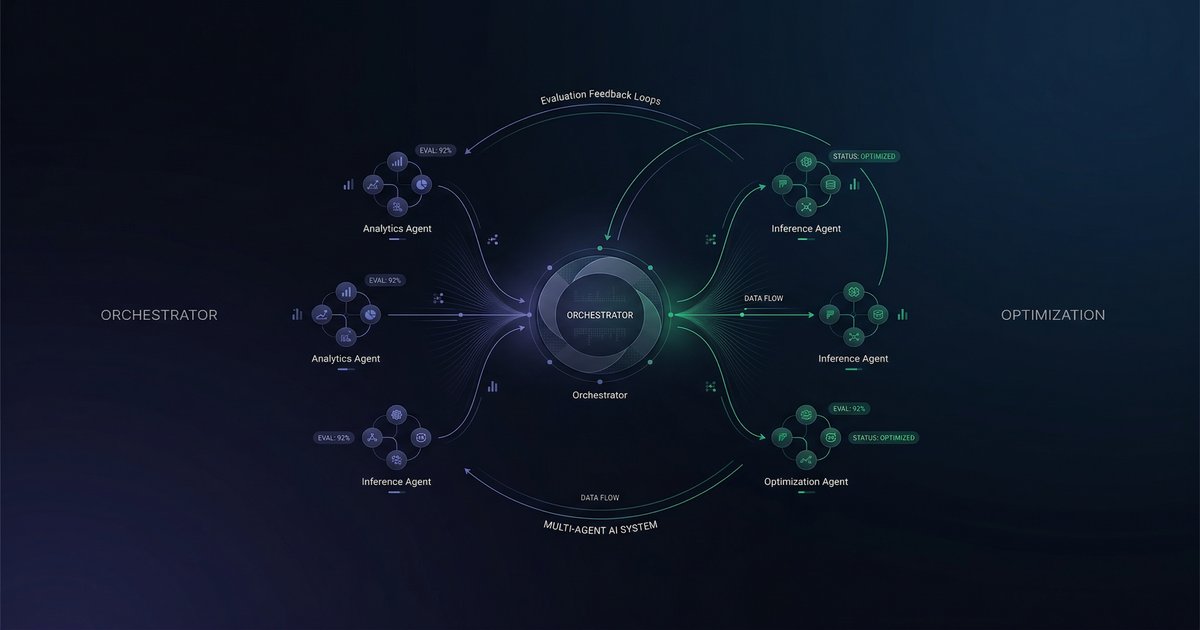

You build a multi-agent system. Each agent is good at its job. But the system as a whole still fails in ways you cannot trace to any single component. The orchestrator withholds context. One agent is too verbose, crowding out another's budget. Retrieved preferences get lost between handoffs. Sound familiar?

A new paper from DoorDash and WithMetis.ai, "Build, Judge, Optimize" (ICLR 2026 MALGAI Workshop), attacks this problem head-on. The authors built a production grocery shopping assistant called MAGIC, evolved it from a monolithic single agent into a modular multi-agent architecture, and then worked out how to improve it continuously. Their key insight: evaluation quality gates optimization quality. Get the judge wrong, and no amount of prompt tuning will help.

This is one of the first papers to formalize end-to-end optimization of tightly coupled multi-agent systems at production scale. Here is what they found and why it matters for anyone building agent teams.

The Problem: Local Improvements, Global Failures

MAGIC started as a single LLM handling everything — intent parsing, product search, personalized ranking, cart management. As features grew, the context window bloated with tool traces, responsibilities interfered with each other, and early ambiguities in user requests propagated silently downstream.

The team decomposed MAGIC into specialized sub-agents behind an orchestrator: a QueryGenerator, an ItemSelector, a preference handler, and others. This improved control and debuggability. But it introduced a new class of problems that only manifest at the system level:

- The orchestrator withholds context from a sub-agent that needs it

- A sub-agent generates verbose output that floods the shared context window

- Personalization data is retrieved correctly but never makes it downstream

- Substitution logic works in isolation but breaks when the user revises mid-conversation

These are coordination failures — invisible to per-agent evaluation, only detectable by looking at the full interaction trajectory. Optimizing each agent independently (even successfully) does not fix them.

Step 1: Build a Judge You Can Trust

Before you can optimize anything, you need a reliable score. The paper's first contribution is a structured evaluation rubric that replaces subjective ratings with grounded boolean checks.

Binary Checks, Not Ordinal Scores

Instead of "rate helpfulness from 1 to 5" (subjective, inconsistent, unreproducible), every evaluation dimension is a concrete yes/no question evaluated against trace evidence:

- Did the agent add the correct number of items to the cart? (Yes/No)

- Were the user's dietary preferences respected? (Yes/No)

- Did the agent provide accurate information about product availability? (Yes/No)

The aim is that the same trace and the same questions produce the same answers every time. That gives you a far more stable reward signal to optimize against than ordinal ratings do.

Four Weighted Domains

| Domain | Weight | What It Measures |
|---|---|---|
| Shopping Execution | 50% | Cart completeness, quantity accuracy, no duplicates, overall task success |
| Personalization | 20% | Dietary preferences, preferred brands, context retention across turns |
| Safety & Compliance | 20% | Food safety, content moderation, platform policy alignment |
| Conversational Quality | 10% | Clarification behavior, information integrity, tone, flow |

Certain checks are marked critical — failures that cause the entire trace to fail regardless of other scores. Cart completeness and information integrity are non-negotiable. This enforces hard constraints rather than letting the system trade away correctness for politeness.
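To make the interaction between weights and critical checks concrete, here is a minimal sketch. The weights come from the table above; everything else is an assumption for illustration: the `CheckResult` shape, the rule that a domain's score is the fraction of its checks that passed, the renormalization over domains that have checks, and the `pass_threshold` cutoff. The paper's actual aggregation may differ.

```python
from dataclasses import dataclass

# Weights copied from the table above.
DOMAIN_WEIGHTS = {
    "shopping_execution": 0.50,
    "personalization": 0.20,
    "safety_compliance": 0.20,
    "conversational_quality": 0.10,
}


@dataclass(frozen=True)
class CheckResult:
    name: str
    domain: str
    passed: bool
    critical: bool = False


def score_trace(results, pass_threshold=0.8):
    """Return (passed, weighted_score) for one trace.

    Assumptions (not from the paper): a domain's score is the fraction of
    its checks that passed, weights are renormalized over the domains that
    have at least one check, and pass_threshold is an illustrative cutoff.
    """
    if not results:
        raise ValueError("no check results to score")
    by_domain = {}
    for r in results:
        by_domain.setdefault(r.domain, []).append(r.passed)
    total_weight = sum(DOMAIN_WEIGHTS[d] for d in by_domain)
    score = sum(
        DOMAIN_WEIGHTS[d] * sum(v) / len(v) for d, v in by_domain.items()
    ) / total_weight
    # A failed critical check fails the trace no matter how high the score is.
    critical_failure = any(r.critical and not r.passed for r in results)
    return (not critical_failure and score >= pass_threshold), score
```

The point of returning both values is that a trace can score well on tone and personalization and still fail outright because the cart was incomplete.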

Conditional Activation

Not every check applies to every interaction. If the user never mentions dietary preferences, the dietary preference check is not activated. The judge first determines which criteria are applicable to this specific trace, then evaluates only those. This prevents irrelevant checks from diluting the signal — a subtle but important design choice that makes cross-trajectory comparison meaningful.
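A small sketch of the gating idea follows. In the paper the judge is an LLM that decides applicability; the plain predicates here are a stand-in, and the `Check` class, the trace fields (`dietary_preferences`, `cart`, `diet_tags`, `item_id`), and both example checks are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

Trace = dict  # hypothetical trace shape: a plain dict of logged fields


@dataclass(frozen=True)
class Check:
    name: str
    applies: Callable[[Trace], bool]  # activation gate
    passes: Callable[[Trace], bool]   # the yes/no question itself


def evaluate(trace, checks):
    """Map each check name to True/False, or None when it was not activated."""
    return {
        c.name: (c.passes(trace) if c.applies(trace) else None) for c in checks
    }


def pass_rate(outcomes):
    """Fraction of activated checks that passed; None if none were activated."""
    active = [v for v in outcomes.values() if v is not None]
    return sum(active) / len(active) if active else None


CHECKS = [
    Check(
        name="dietary_preferences_respected",
        # Only activated when the user actually stated a preference.
        applies=lambda t: bool(t.get("dietary_preferences")),
        passes=lambda t: all(
            set(t["dietary_preferences"]) <= set(item["diet_tags"])
            for item in t["cart"]
        ),
    ),
    Check(
        name="no_duplicate_items",
        applies=lambda t: True,
        passes=lambda t: len({i["item_id"] for i in t["cart"]}) == len(t["cart"]),
    ),
]
```

Because inactive checks are recorded as `None` and left out of the denominator, a trace with no stated dietary preference is neither rewarded nor penalized for that check.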

Step 2: Calibrate the Judge (Meta-Optimization)

Here is where it gets interesting. Even with a well-designed rubric, the raw LLM judge only agreed with human annotators 84.1% of the time. Not terrible, but not good enough to drive an optimization loop — noise in the reward signal means noise in the optimization.

Their solution: optimize the judge itself using the same prompt optimization framework (GEPA) that they would later use on the agents. They fed the judge human-labeled traces, measured disagreements, and iteratively refined the judge's prompts to align with human judgment.
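The measurement half of that loop is simple to sketch. The function below computes judge-human agreement per domain and collects the disagreements that would feed the next round of prompt refinement. The record layout is an assumption, and the refinement step itself (GEPA in the paper) is not shown.

```python
from collections import defaultdict


def agreement_by_domain(records):
    """Compare judge verdicts with human labels.

    records: iterable of (domain, judge_verdict, human_verdict) tuples,
    one per evaluated check. This layout is illustrative only.
    Returns (rates, disagreements), where rates maps each domain to the
    share of checks on which judge and human agreed.
    """
    hits = defaultdict(int)
    totals = defaultdict(int)
    disagreements = []
    for domain, judge, human in records:
        totals[domain] += 1
        if judge == human:
            hits[domain] += 1
        else:
            # These cases are what a prompt optimizer would learn from.
            disagreements.append((domain, judge, human))
    rates = {d: hits[d] / totals[d] for d in totals}
    return rates, disagreements
```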

| Domain | Agreement before | Agreement after | Gain |
|---|---|---|---|
| Shopping Execution | 90.4% | 95.0% | +5.1% |
| Personalization | 70.8% | 80.2% | +13.2% |
| Conversational Quality | 91.1% | 99.0% | +8.6% |
| Overall (weighted) | 88.5% | 93.5% | +5.0% |

The biggest gain came in Personalization, where judge-human agreement rose from 70.8% to 80.2%. This is exactly the domain where "correct" is most context-dependent. The optimized judge prompt added explicit grounding rules: items only count as "in cart" if they have a selected_item_id, substitutions only count if user-approved, and brand specificity matters for attribute matching.
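In the paper these grounding rules live in the judge's prompt. Written as plain code they might look like the sketch below, which is only an illustration: `selected_item_id` is the one field named in the source, while `is_substitution`, `user_approved`, and the exact-brand rule are assumptions about how such rules could be encoded.

```python
def grounded_cart_items(trace_items):
    """Keep only the items that count as 'in cart' under the grounding rules."""
    counted = []
    for item in trace_items:
        if not item.get("selected_item_id"):
            continue  # searched or mentioned, but never actually selected
        if item.get("is_substitution") and not item.get("user_approved"):
            continue  # a substitution the user never approved does not count
        counted.append(item)
    return counted


def brand_matches(requested_brand, item_brand):
    """Assumed rule: a named brand must match; no named brand accepts any."""
    if requested_brand is None:
        return True
    return requested_brand.strip().lower() == (item_brand or "").strip().lower()
```

Rules like these remove the ambiguity that makes two annotators, or a judge and an annotator, disagree about whether the same cart is "complete".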

Key insight: Using an optimizer to calibrate the evaluator that will drive downstream optimization is a powerful meta-pattern. If your judge is wrong, every optimization decision built on top of it is wrong too. Invest in judge quality before agent quality.

Step 3: Optimize Individual Agents (Sub-agent GEPA)

With a calibrated judge in hand, the first tier of optimization targets individual sub-agents. The orchestrator provides each sub-agent with a bounded, structured context — which means multi-turn optimization reduces to a single-turn problem per node.

For each sub-agent, the team:

- Extracted invocation-level examples from logged production traces

- Defined micro-rubrics — small sets of binary checks derived from recurring failure patterns, mapped back to the four global domains

- Used GEPA to search prompt variants that maximize micro-rubric scores on a held-out test set

This works well for atomic failures — a sub-agent misinterpreting a quantity, selecting the wrong product variant, or failing to apply a dietary filter. Each of these can be fixed by improving that agent's prompt in isolation.
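Because each sub-agent call is a single-turn problem, the selection step can be sketched in a few lines. This is not GEPA, which proposes new prompt variants itself; it is a plain argmax over a fixed candidate list, shown only to illustrate scoring against a micro-rubric. The `run_agent` callable, the split sizes, and the choice to select on a development split and report on a held-out split are all assumptions.

```python
import random


def micro_rubric_score(output, checks):
    """Fraction of binary checks that one sub-agent output passes."""
    return sum(bool(check(output)) for check in checks) / len(checks)


def select_prompt(candidates, run_agent, examples, checks,
                  holdout_fraction=0.3, seed=0):
    """Pick the best prompt on a dev split, then score it on held-out data.

    run_agent(prompt, example) -> output is a hypothetical callable that
    invokes the sub-agent once with the logged invocation context.
    Returns (best_prompt, holdout_score).
    """
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * (1 - holdout_fraction))
    dev, holdout = shuffled[:cut], shuffled[cut:]
    if not dev or not holdout:
        raise ValueError("need enough examples for both splits")

    def mean_score(prompt, subset):
        scores = [micro_rubric_score(run_agent(prompt, ex), checks) for ex in subset]
        return sum(scores) / len(scores)

    best = max(candidates, key=lambda p: mean_score(p, dev))
    return best, mean_score(best, holdout)
```

Keeping the held-out split out of the selection step is a design choice of this sketch: it stops the reported score from being inflated by the search itself.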

But sub-agent GEPA has a structural blind spot: it cannot detect or fix problems that emerge from how agents interact.

Step 4: Optimize the Whole System (MAMUT)

This is the paper's most novel contribution. MAMUT (Multi-Agent Multi-Turn) GEPA optimizes a prompt bundle — the complete set of prompts across all agents — against trajectory-level scores.

Instead of asking "is this agent's output good?" it asks "does this combination of agent behaviors produce a good outcome for the user?"

How MAMUT Works

1. Hybrid trajectory simulation. You cannot just replay logged conversations when you change agent prompts, because the agent's behavior diverges from what was logged. MAMUT uses a clever hybrid approach:

- If the optimized agent's action is semantically equivalent to the logged action (verified via natural language inference), replay the real user's next response. This maintains fidelity.

- If the action diverges, a User Persona Agent generates a synthetic response consistent with the original user's constraints and preferences.

2. Joint failure identification. The calibrated judge analyzes full trajectories under the current prompt bundle and identifies cross-agent failure patterns.

3. Safety veto. Any proposed prompt bundle that causes Safety regressions is rejected outright, regardless of improvements elsewhere. Safety is a hard constraint, not a tradeoff.

4. Cross-agent tradeoffs. MAMUT can discover optimizations invisible to per-agent approaches. For example: making the orchestrator more concise so a downstream search agent has more context budget. Neither agent is "broken" individually — the improvement comes from rebalancing resources between them.
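The hybrid simulation in step 1 can be sketched as a rollout loop. All names here are hypothetical: `agent` stands for the system under the candidate prompt bundle, `is_equivalent` for the NLI-based equivalence check, and `persona_reply` for the User Persona Agent. The sketch also assumes that once a trajectory has diverged, every later user turn is synthesized, since the logged replies no longer correspond to the conversation; the source does not specify this.

```python
def simulate(agent, first_user_message, logged_turns, is_equivalent, persona_reply):
    """Roll out one trajectory under new prompts against a logged conversation.

    logged_turns: list of (logged_agent_action, logged_user_reply) pairs.
    Returns a list of (role, text, source) tuples. For user turns, source is
    "replayed" or "synthetic"; for agent turns it is None.
    """
    transcript = [("user", first_user_message, "replayed")]
    user_message = first_user_message
    diverged = False  # assumption: divergence is sticky for the rest of the run
    for logged_action, logged_reply in logged_turns:
        action = agent(user_message)
        transcript.append(("agent", action, None))
        if not diverged and is_equivalent(action, logged_action):
            # Same meaning as the logged action: reuse the real user's reply.
            user_message, source = logged_reply, "replayed"
        else:
            # Behavior changed: ask the persona agent for a consistent reply.
            diverged = True
            user_message, source = persona_reply(action), "synthetic"
        transcript.append(("user", user_message, source))
    return transcript
```

The `source` tag is kept on every user turn so that later analysis can tell how much of a trajectory's score rests on real user behavior and how much on simulation.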

Results

Evaluated on 238 held-out trajectories:

| Domain | Sub-agent GEPA | MAMUT | Gain (percentage points) |
|---|---|---|---|
| Shopping Execution | 79.0% | 85.0% | +6.0 |
| Personalization | 80.2% | 87.0% | +6.8 |
| Conversational Quality | 64.0% | 72.0% | +8.0 |
| Safety & Compliance | 76.0% | 88.0% | +12.0 |
| Overall pass rate | 77.1% | 84.7% | +7.6 |

MAMUT outperforms sub-agent GEPA in every domain. The largest gain is in Safety & Compliance (+12.0 percentage points), a domain that fundamentally requires cross-agent coordination. The Personalization improvement (+6.8 points) was traced specifically to MAMUT optimizing the orchestrator to pass retrieved preferences downstream correctly, a behavior that node-level optimization structurally cannot incentivize.

What This Means for Agent Builders

Whether you are running a production multi-agent system or building one for the first time, this paper offers six concrete takeaways:

1. Evaluation before optimization. Build a reliable evaluation signal first. If your judge is noisy, your optimization will be noisy. The paper demonstrates that investing in judge calibration (84% → 93% agreement) is a prerequisite for trustworthy improvement.

2. Binary checks over vibes. Replace "rate quality 1-5" with grounded boolean checks against trace evidence. You get reproducible scores that work as stable reward signals. This applies to any agent evaluation, not just multi-agent systems.

3. Conditional activation is underrated. Not all checks apply to every interaction. Gate your evaluations on what is actually relevant to each trajectory. This prevents score dilution and makes comparisons meaningful.

4. Per-agent optimization is necessary but insufficient. Sub-agent tuning fixes atomic failures effectively. But coordination failures — context passing, verbosity budgets, preference relay — require trajectory-level optimization. If your agents work fine in isolation but fail together, this is why.

5. Safety as a veto, not a metric. Making safety a hard constraint (reject any change that causes regressions) is more robust than treating it as one dimension to optimize alongside others. You cannot trade safety for helpfulness.

6. The hybrid replay pattern. When evaluating prompt changes: replay real user turns when agent behavior is consistent, synthesize when it diverges. This balances evaluation fidelity with the need to explore behavioral changes.
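Takeaway 5 is the easiest of these to put into code. The sketch below treats safety as a gate that runs before any comparison of overall scores. It assumes scores are aggregate pass rates per domain, that "regression" means any drop in the safety pass rate, and that a change must also improve the overall score to be accepted. The key names are hypothetical, and the paper may define regression at a finer granularity.

```python
def accept_bundle(candidate, baseline,
                  safety_key="safety_compliance", overall_key="overall"):
    """Decide whether a candidate prompt bundle replaces the baseline.

    candidate and baseline map domain names to pass rates in [0, 1].
    """
    # Hard constraint: any safety regression rejects the candidate outright.
    if candidate[safety_key] < baseline[safety_key]:
        return False
    # Only then compare overall quality (assumed acceptance rule).
    return candidate[overall_key] > baseline[overall_key]
```

Putting the veto first means a large overall gain can never compensate for a safety drop, which is the difference between a constraint and a weighted metric.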

The Bigger Picture

The paper's title says it all: Build, Judge, Optimize. This is not a one-time setup — it is a continuous loop. You build agents, build a judge, calibrate the judge, use the judge to optimize agents, identify new failure modes, refine the rubric, recalibrate the judge, and keep going.

Most teams stop at "build." The good ones add evaluation. Very few close the loop with systematic, trajectory-level optimization. MAMUT shows what becomes possible when you do: a 7.6-point gain in overall pass rate over sub-agent optimization alone (77.1% to 84.7%), with improvements in every domain and the largest in safety, where per-agent tuning plateaus.

Multi-agent systems are becoming the default architecture for complex AI applications. The teams that win will not be the ones with the best individual agents — they will be the ones with the best feedback loops.

Paper: "Build, Judge, Optimize: A Blueprint for Continuous Improvement of Multi-Agent Consumer Assistants" — Breen Herrera, Sheth, Xu, Zhan, Wei, Das, Wright, Yearwood. ICLR 2026 MALGAI Workshop. arXiv:2603.03565
