Here's a problem that most coding benchmarks completely ignore: an LLM generates Python code that runs without a single error, passes syntax checks, and produces output — but the output is wrong in a way no unit test would catch. The code creates a mathematical animation where the gradient descent arrows point the wrong direction. Or the loss curve updates before the parameter change that causes it. The code is technically correct. The visual is pedagogically useless.

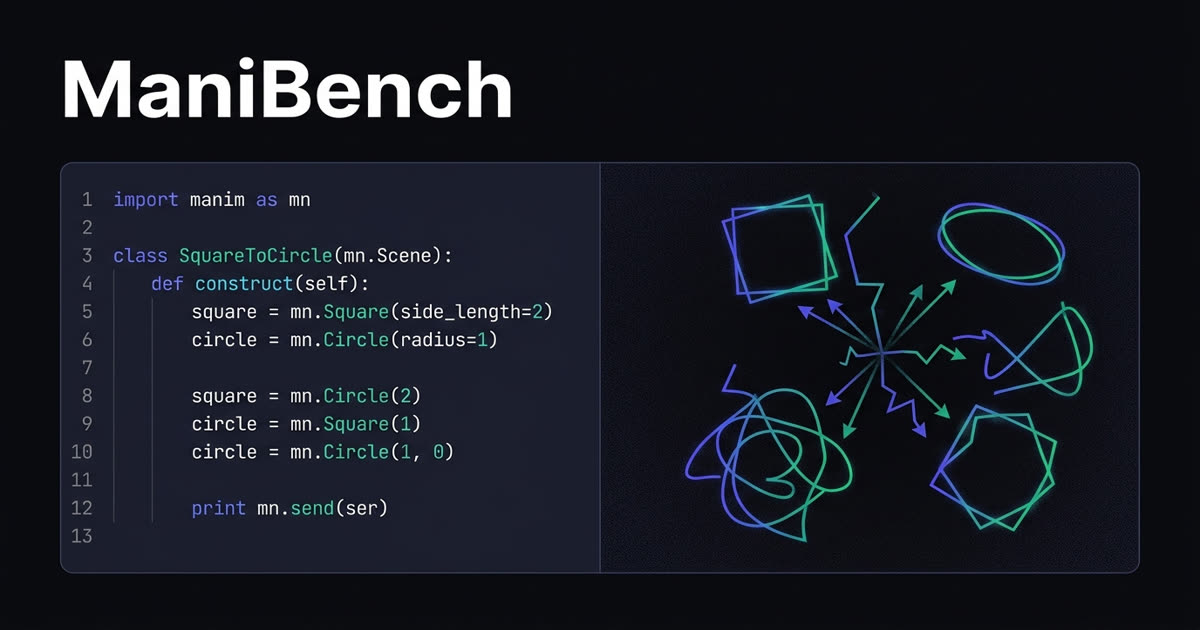

A new paper from researchers at Islington College introduces ManiBench, a benchmark specifically designed to measure this kind of failure in LLM-generated Manim code — the Python animation engine behind 3Blue1Brown's famous math videos.

The Problem: Code That Runs ≠ Code That's Right

Standard benchmarks like HumanEval, MBPP, and SWE-Bench evaluate code generation by asking: does it run? Does it produce the expected output? Does it pass the test suite? That works great for algorithms and data processing. It falls apart when the code's job is to produce dynamic, time-dependent visuals.

ManiBench identifies two failure modes that existing benchmarks miss entirely:

Failure Mode 1: Syntactic Hallucinations

This is when an LLM writes valid Python that references Manim classes, methods, or parameters that don't actually exist — or that existed in a different version of the library.

Common examples:

- Inventing classes: generating `MCircle` instead of the actual `Circle` class

- Using deprecated methods: calling `mobject.scale()` when the current API uses `mobject.scale_to_fit_width()`

- Mixing API versions: combining ManimGL syntax (3Blue1Brown's original version) with Manim CE (Community Edition) calls, which crashes the pipeline

- Wrong method signatures: passing arguments that a function doesn't accept

💡 Why is this so common? Manim exists in two major variants: the original ManimGL (Grant Sanderson's own version) and Manim CE (the Community Edition fork). LLMs frequently conflate the two, generating code that blends syntax from both. The researchers documented 145 known GL→CE incompatibilities across eight categories.

The insidious part: these hallucinated API calls are syntactically valid Python. A syntax check won't flag them. They only fail at runtime, when Manim tries to resolve a class or method that doesn't exist in the installed version.
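To see why a syntax check is not enough, here is a minimal sketch of a pre-render check. `undefined_calls` is a hypothetical helper, not part of ManiBench or Manim: it parses the generated code with Python's `ast` module (which succeeds for any syntactically valid code) and then resolves every bare-name call against the *installed* module, the same lookup that fails at runtime. It assumes the code star-imports the library, as Manim scripts usually do, and is demonstrated on the standard-library `math` module so it runs anywhere.

```python
import ast
import builtins
import importlib


def undefined_calls(source: str, module_name: str) -> list[str]:
    """Hypothetical helper: return names called in `source` that exist neither
    in the installed `module_name`, in builtins, nor among the snippet's own
    definitions. Assumes `source` does `from <module_name> import *`."""
    tree = ast.parse(source)  # passes for any syntactically valid code
    module = importlib.import_module(module_name)

    # Names the snippet defines itself: classes, functions, assigned variables.
    local_names = set()
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
            local_names.add(node.name)
        elif isinstance(node, ast.Name) and isinstance(node.ctx, ast.Store):
            local_names.add(node.id)

    # Any bare-name call that resolves nowhere is a likely hallucination.
    missing = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            name = node.func.id
            if not (hasattr(module, name) or hasattr(builtins, name)
                    or name in local_names):
                missing.add(name)
    return sorted(missing)


# Syntactically valid code that calls a function `math` does not provide.
snippet = "from math import *\nx = sqroot(2)\ny = sqrt(2)\nprint(x, y)\n"
print(undefined_calls(snippet, "math"))  # ['sqroot']
```

With Manim CE installed, calling `undefined_calls(code, "manim")` on generated scene code should report an invented class such as `MCircle`. A check like this cannot catch wrong method signatures or bad arguments; those still surface only when the scene actually runs.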

Failure Mode 2: Visual-Logic Drift

This is the more subtle — and more interesting — failure. The code runs. No exceptions. No import errors. It even produces an animation. But the animation doesn't correctly represent the math it's supposed to teach.

The drift shows up in specific ways:

- Missing visual events — a gradient descent animation that moves the point but doesn't update the corresponding coordinates

- Inverted sequencing — showing the loss curve change before the parameter update that causes it, breaking the causal narrative

- Timing misalignment — animations that run too fast, too slow, or skip pedagogical pauses that make the math digestible

- Hidden derivations — jumping to a final result without showing the intermediate steps that explain how you got there

No existing benchmark measures this. HumanEval doesn't care about temporal semantics. SWE-Bench doesn't evaluate whether a rendered animation correctly communicates mathematical concepts. ManiBench does.
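Some of these drift patterns can be phrased as checks over a scene's event timeline. The sketch below is hypothetical and is not ManiBench's evaluator: it assumes an earlier step has already extracted a start time (in seconds) for each named visual event, and that the problem author lists causal pairs that must appear in order. It flags missing events and inverted sequencing; judging pacing or hidden derivations would need more than this.

```python
def drift_report(
    timeline: dict[str, float],
    causal_pairs: list[tuple[str, str]],
) -> list[str]:
    """Hypothetical check: `timeline` maps event names to start times (s);
    each (cause, effect) pair says `cause` must start no later than `effect`."""
    report: list[str] = []
    for cause, effect in causal_pairs:
        absent = [name for name in (cause, effect) if name not in timeline]
        for name in absent:
            message = f"missing: {name}"
            if message not in report:  # report each missing event once
                report.append(message)
        if not absent and timeline[effect] < timeline[cause]:
            report.append(f"inverted: {effect} before {cause}")
    return report


# A gradient-descent scene that redraws the loss curve too early and never
# updates the coordinate label (event names are made up for illustration).
timeline = {"update_params": 2.0, "update_loss_curve": 1.5, "move_point": 2.0}
pairs = [("update_params", "update_loss_curve"), ("move_point", "update_coords")]
print(drift_report(timeline, pairs))
# ['inverted: update_loss_curve before update_params', 'missing: update_coords']
```

The hard part in practice is producing the timeline from a rendered animation; once it exists, ordering constraints like these are cheap to verify.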

How ManiBench Evaluates

The benchmark uses a four-tier evaluation framework:

- Executability (Pass@1) — does the code run without exceptions or deprecated imports? This is the baseline filter.

- Version-Conflict Error Rate (VCER) — how often does the generated code trigger version-specific errors from mixing GL and CE APIs?

- Alignment Score — a weighted measure of whether required visual events are both present and temporally accurate. Each event gets an importance weight, a presence flag, and a timing accuracy score.

- Coverage Score — a four-dimensional assessment measuring object coverage, transformation fidelity, annotation completeness, and timing precision.
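The description of the Alignment Score suggests a weighted average over required events. One plausible reading, assumed here rather than taken from the paper, is AS = Σᵢ wᵢ·pᵢ·tᵢ / Σᵢ wᵢ, where wᵢ is event i's importance weight, pᵢ ∈ {0, 1} its presence flag, and tᵢ ∈ [0, 1] its timing accuracy. The sketch also treats the Coverage Score as an unweighted mean of its four dimensions, again an assumption; the paper's exact formulas may differ.

```python
from dataclasses import dataclass


@dataclass
class VisualEvent:
    name: str
    weight: float   # importance weight w_i
    present: bool   # presence flag p_i: was the event found in the render?
    timing: float   # timing accuracy t_i in [0, 1]


def alignment_score(events: list[VisualEvent]) -> float:
    """Assumed form: sum(w * p * t) / sum(w); absent events contribute 0."""
    total_weight = sum(e.weight for e in events)
    if total_weight == 0:
        return 0.0
    earned = sum(e.weight * e.timing for e in events if e.present)
    return earned / total_weight


def coverage_score(objects: float, transforms: float,
                   annotations: float, timing: float) -> float:
    """Assumed form: unweighted mean of the four coverage dimensions in [0, 1]."""
    return (objects + transforms + annotations + timing) / 4


events = [
    VisualEvent("move_point", weight=2.0, present=True, timing=1.0),
    VisualEvent("update_coords", weight=1.0, present=False, timing=0.0),
    VisualEvent("update_loss_curve", weight=1.0, present=True, timing=0.5),
]
print(alignment_score(events))  # 0.625
```

Under this reading, a missing event costs its full weight, while a present but mistimed event is only partially penalized.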

The first two metrics (Executability and VCER) are straightforward to automate. The last two (Alignment and Coverage) are where ManiBench gets interesting — they require evaluating whether the rendered output actually matches the intended mathematical narrative.
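Because the first two metrics only need the outcome of running each generated script, they reduce to simple ratios. In this hypothetical sketch, each run is recorded with its error message (or `None` for a clean render). With one sample per problem, Pass@1 is just the fraction of clean runs. VCER here counts a failure as a version conflict if its message names an identifier from a small, illustrative list of ManimGL-only names, such as `ShowCreation`, which Manim CE renamed to `Create`; the paper's catalogue of 145 incompatibilities is far richer than this list.

```python
from dataclasses import dataclass
from typing import Optional

# Illustrative ManimGL-only identifiers (not the paper's full catalogue).
GL_ONLY_NAMES = {"ShowCreation", "TexText"}


@dataclass
class RunResult:
    problem_id: str
    error: Optional[str]  # exception message, or None if the scene rendered


def executability(results: list[RunResult]) -> float:
    """Pass@1 with one sample per problem: fraction of clean runs."""
    return sum(r.error is None for r in results) / len(results)


def is_version_conflict(error: Optional[str]) -> bool:
    """Heuristic: the error message names a known GL-only identifier."""
    return error is not None and any(name in error for name in GL_ONLY_NAMES)


def vcer(results: list[RunResult]) -> float:
    """Fraction of generations whose failure looks like a GL/CE conflict."""
    return sum(is_version_conflict(r.error) for r in results) / len(results)


results = [
    RunResult("p1", None),
    RunResult("p2", "NameError: name 'ShowCreation' is not defined"),
    RunResult("p3", "TypeError: unexpected keyword argument 'radius'"),
    RunResult("p4", None),
]
print(executability(results), vcer(results))  # 0.5 0.25
```

Matching on error text is crude, but it shows why these two metrics automate easily: they need no judgment about what the animation means.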

The Dataset: 3Blue1Brown as Ground Truth

ManiBench's problems are grounded in analysis of 3Blue1Brown's actual ManimGL source code — approximately 53,000 lines across 143 scene classes and 120 visual techniques. The pilot dataset includes 12 hand-crafted problems spanning five difficulty levels across calculus, linear algebra, probability, topology, and AI concepts.

The benchmark tests six LLMs across five different prompting strategies, measuring how each handles the unique challenges of animation code generation. The automated evaluation pipeline, dataset, and code are all open-source.

Why This Matters Beyond Manim

ManiBench is specifically about Manim, but the concept it exposes — visual-logic drift — is a much broader problem. Any domain where code produces visual, temporal, or interactive output has this same evaluation gap:

- Data visualization — a chart that renders but miscommunicates the trend

- Game development — physics code that compiles but produces unrealistic motion

- UI generation — layouts that render but break user expectations about interaction flow

- Simulation code — models that run but produce results that diverge from the intended physics

As LLMs are increasingly used to generate code in these domains, benchmarks that only check "does it run?" and "does it pass tests?" will systematically miss the most important failures. ManiBench is a step toward evaluation frameworks that care about whether the output is actually correct in the way that matters to the end user.

Links

- Paper: arXiv:2603.13251

- Code & Benchmark: github.com/nabin2004/ManiBench

- Dataset: HuggingFace — nabin2004/ManiBench

ManiBench is a small benchmark (12 problems in the pilot) tackling a genuinely underexplored problem. The standard coding benchmarks we use to evaluate LLMs have a blind spot: they can't tell you whether generated visual or temporal code actually communicates what it's supposed to. As AI-generated code increasingly moves into domains like education, visualization, and simulation, we'll need evaluation frameworks that go beyond "it compiles and passes tests." ManiBench is an early but important step in that direction.
