The async RL training revolution is here, and it's reshaping how we train the next generation of AI models.

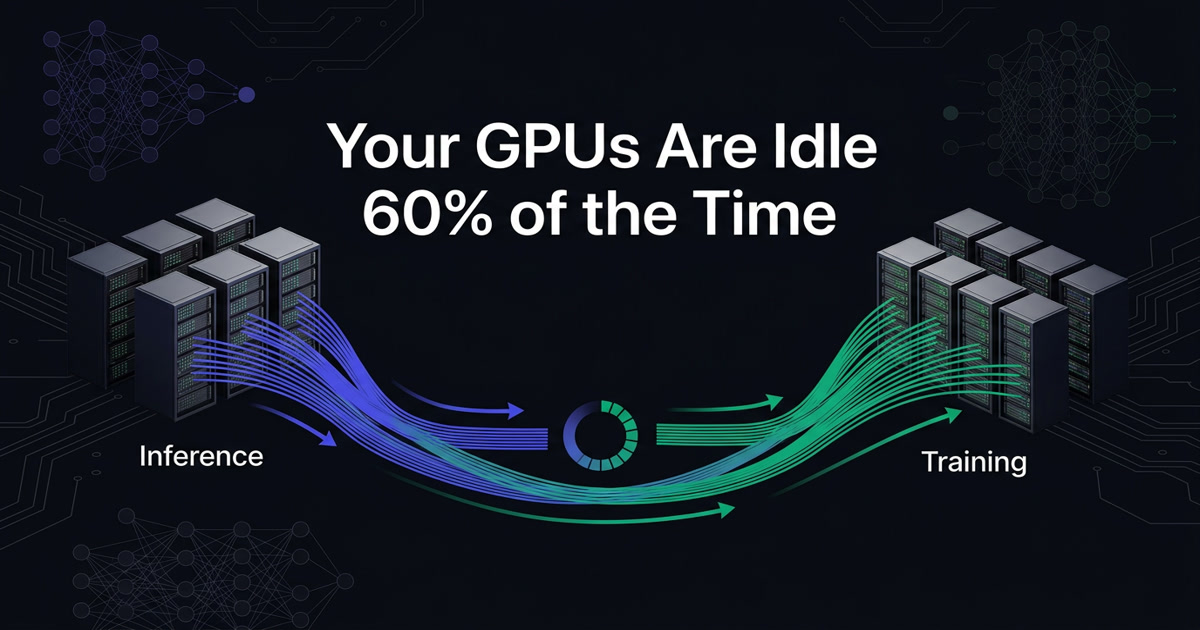

If you're training a reasoning model with reinforcement learning in 2026, there's a good chance your expensive H100 GPUs are sitting idle for more than half the training run. Not because something is broken — because the architecture is.

A new survey from Hugging Face's TRL team examined 16 open-source libraries tackling this problem, and the findings reveal a striking convergence: the entire ecosystem has independently arrived at the same architectural solution. Here's what they found, why it matters, and what it means for the future of AI training.

The Problem: Generation Is the Bottleneck

Modern RL training for language models follows a loop: generate text (rollouts), score it to compute rewards, update the model weights, repeat. The catch? Generation dominates wall-clock time.

Consider how long the generation phase of a typical GRPO training step takes on a single H100 GPU:

- Short chain-of-thought (2K tokens): ~3 minutes for a 7B model, ~14 minutes for a 32B model

- Medium CoT (8K tokens): ~11 minutes for 7B, nearly an hour for 32B

- Long CoT (32K tokens): ~45 minutes for 7B, 3.7 hours for 32B

During all of that generation time, the training GPUs sit completely idle. They're allocated, they're drawing power, they're costing money — and they're doing nothing.

The straggler problem makes it worse. With algorithms like GRPO that generate multiple completions per prompt, the entire batch is gated by the slowest completion. One prompt might produce a 1K-token response while another spins out 32K tokens of reasoning. Everyone waits for the slowest one.
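To make the straggler effect concrete, here is a minimal sketch. It assumes every completion decodes independently at the same fixed rate, which is a simplification of real batched decoding; the function names, the group of eight completions, and the 50 tokens/second rate are all hypothetical.

```python
def batch_generation_time(completion_tokens, tokens_per_second):
    """A synchronous batch finishes only when its longest completion does."""
    return max(completion_tokens) / tokens_per_second


def idle_fraction(completion_tokens, tokens_per_second):
    """Fraction of total decode-slot time spent waiting on the straggler."""
    wall = batch_generation_time(completion_tokens, tokens_per_second)
    busy = sum(n / tokens_per_second for n in completion_tokens)
    return 1.0 - busy / (wall * len(completion_tokens))


# Hypothetical group of 8 completions for one prompt: seven short, one long.
lengths = [1_000] * 7 + [32_000]

print(batch_generation_time(lengths, tokens_per_second=50.0))    # 640.0 seconds
print(round(idle_fraction(lengths, tokens_per_second=50.0), 3))  # 0.848
```

Under these assumptions the seven short completions finish in 20 seconds each, yet the batch takes 640 seconds, so roughly 85% of the available decode-slot time is spent waiting.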

The Solution Everyone Converged On

All 16 libraries surveyed arrived at the same core architecture: disaggregated async training. The concept is simple:

- Separate inference and training onto different GPU pools. Inference GPUs generate rollouts continuously. Training GPUs compute gradients continuously.

- Connect them with a rollout buffer. Generated data flows into a queue; the trainer pulls from it.

- Sync weights asynchronously. After each gradient update, push new weights to the inference pool without stopping either side.

Neither pool waits for the other. Generation never stops, training never idles. The buffer absorbs the variance.
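The three pieces above can be sketched as a producer and a consumer joined by a queue. This is a toy model using `asyncio` on one machine, not the design of any surveyed library: the `policy` dict stands in for real model weights, `asyncio.sleep(0)` stands in for decoding, and all names are illustrative assumptions.

```python
import asyncio


async def generator(queue, policy, num_rollouts):
    """Inference pool: produce rollouts tagged with the policy version used."""
    for i in range(num_rollouts):
        await asyncio.sleep(0)  # stand-in for decoding a completion
        # put() blocks when the buffer is full, which is the back-pressure.
        await queue.put({"id": i, "version": policy["version"]})
    await queue.put(None)  # sentinel: no more rollouts


async def trainer(queue, policy, batch_size):
    """Training pool: pull batches, take a step, publish new weights."""
    lags, batch = [], []
    while (item := await queue.get()) is not None:
        batch.append(item)
        if len(batch) == batch_size:
            # Record how many versions behind each rollout is at train time.
            lags.extend(policy["version"] - r["version"] for r in batch)
            policy["version"] += 1  # stand-in for optimizer step + weight sync
            batch = []
    return lags


async def run(depth=4, num_rollouts=16, batch_size=2):
    queue = asyncio.Queue(maxsize=depth)  # the rollout buffer
    policy = {"version": 0}               # shared handle to "current weights"
    _, lags = await asyncio.gather(
        generator(queue, policy, num_rollouts),
        trainer(queue, policy, batch_size),
    )
    return policy["version"], lags


final_version, lags = asyncio.run(run())
print(final_version, lags)
```

Tagging each rollout with the version that produced it costs almost nothing here, and it is what makes the staleness of a sample measurable once it reaches the trainer.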

This is the opposite of the traditional "colocated" approach where both inference and training share the same GPUs, taking turns. Colocated is simpler and cheaper (fewer GPUs), but it can never achieve true overlap — the two workloads must alternate on the same hardware.

The Seven Axes of Comparison

The Hugging Face team compared all 16 libraries across seven design dimensions. Here are the most interesting findings:

1. Ray Dominates Orchestration

Eight of the sixteen libraries use Ray as their orchestration backbone. This isn't a coincidence — Ray's actor model maps naturally onto RL training's heterogeneous components (inference engines, trainers, reward models, environments). It handles scheduling, fault tolerance, and zero-copy data transfer out of the box.

But not everyone agrees. Libraries like PipelineRL and PRIME-RL chose lightweight Python-native coordination (asyncio, threading, Redis streams) instead. When you control the full stack and your deployment topology is fixed, vanilla Python can be simpler to debug than Ray's distributed runtime.

Meta's Monarch framework is emerging as a PyTorch-native alternative to Ray, purpose-built for GPU workloads.

2. Buffer Depth Controls the Trade-off

The depth of the rollout buffer between generation and training sets the balance between throughput and staleness:

- No buffer (synchronous): Zero staleness, maximum idle time. This is how most training works today.

- Double-buffer (depth 1): The simplest async upgrade. Overlaps exactly one generation batch with one training step, giving at most one step of policy lag.

- Bounded queue (depth 2–K): Multiple batches in flight. More throughput, but requires staleness management.

- Unbounded stream: Continuous generation with no limit. Maximum throughput, maximum staleness risk.

Most production systems land in the bounded queue range, trading a controlled amount of staleness for dramatically better GPU utilization.
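A small simulation illustrates why buffer depth acts as a structural bound on staleness. It is a sketch under strong assumptions: the generator is always faster than the trainer, so the buffer stays full, and each freed slot is refilled with the weights available while the trainer is still working on the current batch. The function name and parameters are hypothetical.

```python
from collections import deque


def max_policy_lag(depth, steps):
    """Worst-case policy lag (in trainer steps) for a buffer of `depth` batches."""
    if depth == 0:
        return 0  # synchronous: generate, then train, on the same weights

    buffer = deque([0] * depth)  # buffer pre-filled with version-0 batches
    version = 0
    worst = 0
    for _ in range(steps):
        produced_at = buffer.popleft()      # trainer takes the oldest batch
        worst = max(worst, version - produced_at)
        buffer.append(version)              # generator refills during training
        version += 1                        # trainer publishes new weights
    return worst


for depth in (0, 1, 4):
    print(depth, max_policy_lag(depth, steps=20))
# 0 0
# 1 1
# 4 4
```

In this model the worst-case lag equals the depth, which matches the "at most one step of policy lag" claim for the double-buffer case.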

3. Weight Sync Is the Most Consequential Decision

How you get updated model weights back to the inference servers after a gradient update determines your system's character:

NCCL broadcast is the dominant transport mechanism, taking roughly 100-500 ms per sync. ByteDance's verl achieves ~20 ms with bucketed NCCL transfers. At the other extreme, some libraries use filesystem checkpoints plus HTTP, which is slow but simple.
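One way to picture bucketing is as grouping many small tensors into a few larger transfers, so the fixed per-call overhead is paid fewer times. The sketch below shows only the grouping step in plain Python. It is an assumption about the general idea, not verl's actual implementation, and the parameter names and byte sizes are made up.

```python
def pack_into_buckets(param_sizes, bucket_bytes):
    """Greedily group parameters into buckets of at most `bucket_bytes`.

    A parameter larger than the bucket size gets a bucket of its own.
    """
    buckets, current, used = [], [], 0
    for name, nbytes in param_sizes:
        # Close the current bucket if this parameter would overflow it.
        if current and used + nbytes > bucket_bytes:
            buckets.append(current)
            current, used = [], 0
        current.append(name)
        used += nbytes
    if current:
        buckets.append(current)
    return buckets


# Hypothetical parameter list: (name, size in bytes).
params = [("embed", 600), ("q", 100), ("k", 100), ("v", 100),
          ("mlp", 900), ("norm", 10)]

print(pack_into_buckets(params, bucket_bytes=512))
# [['embed'], ['q', 'k', 'v'], ['mlp'], ['norm']]
```

Here six parameters become four transfers; in a real model with thousands of small tensors the reduction in call count would be much larger.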

The more interesting question is when generation pauses to accept new weights:

- Never pause (PipelineRL): Weight swaps happen between individual token decode steps (~1-10ms gap). Sequences never stop. This is qualitatively different from everything else.

- Abort + resync: In-flight requests are killed, partial work is recycled.

- Soft pause (drain in-flight): Stop accepting new requests, let current ones finish, then sync.

- Full batch blocking: Generation must complete entirely before weight sync. The simplest approach, but loses the most overlap.
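Of the four options above, the soft pause is the easiest to sketch in a few lines. The toy engine below uses `asyncio` events to stop admitting requests, drain the in-flight ones, and then swap versions. `SoftPauseEngine` and its methods are hypothetical and do not mirror any real inference server's API; `asyncio.sleep(0)` stands in for a decode step.

```python
import asyncio


class SoftPauseEngine:
    """Toy inference engine that drains in-flight requests before a weight swap."""

    def __init__(self):
        self.version = 0
        self._in_flight = 0
        self._accepting = asyncio.Event()  # set while new requests are admitted
        self._accepting.set()
        self._idle = asyncio.Event()       # set while nothing is in flight
        self._idle.set()

    async def generate(self, steps):
        await self._accepting.wait()       # new requests block during a sync
        self._in_flight += 1
        self._idle.clear()
        started_with = self.version
        try:
            for _ in range(steps):
                await asyncio.sleep(0)     # stand-in for one decode step
            return started_with, self.version
        finally:
            self._in_flight -= 1
            if self._in_flight == 0:
                self._idle.set()

    async def update_weights(self, new_version):
        self._accepting.clear()            # 1. stop admitting requests
        await self._idle.wait()            # 2. let in-flight requests finish
        self.version = new_version         # 3. swap weights
        self._accepting.set()              # 4. resume


async def demo():
    engine = SoftPauseEngine()
    early = [asyncio.create_task(engine.generate(5)) for _ in range(3)]
    await asyncio.sleep(0)                 # let the early requests start
    await engine.update_weights(1)
    late = await engine.generate(2)
    return await asyncio.gather(*early), late


print(asyncio.run(demo()))
# ([(0, 0), (0, 0), (0, 0)], (1, 1))
```

Every request starts and ends under the same version, which is the property a soft pause buys. The cost is that the sync waits on the slowest in-flight request.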

4. Managing Stale Data Is an Open Problem

When generation and training run in parallel, rollouts are always generated under an older version of the model. Three strategies have emerged:

- Version rejection: Tag each sample with a version number, drop samples that are too old. Simple but wasteful.

- Depth bounding: Limit the buffer size so staleness can't exceed a structural bound. No per-sample tracking needed.

- Importance-sampling correction: Reweight stale samples mathematically instead of discarding them. Preserves throughput but increases gradient variance.

Most production systems combine multiple strategies. PRIME-RL uses all three simultaneously.
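Version rejection and importance-sampling correction can be sketched in a few lines. The truncated importance weight below is one common variant, chosen here for illustration; it is not claimed to be what PRIME-RL or any other surveyed library uses. The sample layout, `max_lag`, and `cap` are hypothetical.

```python
import math


def filter_by_version(samples, current_version, max_lag):
    """Version rejection: drop samples more than `max_lag` versions old."""
    return [s for s in samples if current_version - s["version"] <= max_lag]


def truncated_is_weight(logp_current, logp_behavior, cap=2.0):
    """Importance ratio pi_current / pi_behavior, truncated at `cap`.

    Truncation limits the variance that large ratios would otherwise inject.
    """
    return min(math.exp(logp_current - logp_behavior), cap)


# Hypothetical rollouts tagged with the policy version that generated them.
samples = [{"id": 0, "version": 5}, {"id": 1, "version": 8}, {"id": 2, "version": 10}]

kept = filter_by_version(samples, current_version=10, max_lag=2)
print([s["id"] for s in kept])                        # [1, 2]

print(truncated_is_weight(-1.0, -1.0))                # 1.0 (on-policy sample)
print(truncated_is_weight(-1.0, -3.0))                # 2.0 (ratio e^2 hits the cap)
print(round(truncated_is_weight(-2.0, -1.0), 4))      # 0.3679
```

Combining the two is straightforward: reject anything beyond `max_lag`, then reweight what remains. Depth bounding needs no code on the trainer side because the queue size enforces it.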

5. LoRA Support Is Sparse

Despite LoRA's popularity for fine-tuning, async RL support for adapter-only training is limited. Only a handful of libraries support it, which is a missed opportunity — syncing just the LoRA weights (typically <1% of parameters) could enable sub-millisecond weight transfers.
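The "<1% of parameters" figure follows from simple arithmetic. A rank-r LoRA adapter on a weight matrix of shape d_out x d_in adds two low-rank factors with r * (d_in + d_out) parameters in total. The sketch below works this out for a hypothetical 4096 x 4096 projection at rank 16; the dimensions are assumptions chosen for illustration, not taken from the survey.

```python
def lora_fraction(d_in, d_out, rank):
    """Adapter parameters as a fraction of the adapted matrix's parameters."""
    full = d_in * d_out
    adapter = rank * (d_in + d_out)  # factors A (rank x d_in) and B (d_out x rank)
    return adapter / full


# Hypothetical 4096 x 4096 projection with a rank-16 adapter.
print(lora_fraction(4096, 4096, 16))  # 0.0078125, i.e. about 0.78%
```

This ratio is relative to the adapted matrices only. Layers that carry no adapter, such as embeddings, push the share of the whole model even lower, so far fewer bytes need to cross the wire on each sync.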

6. MoE Is the Emerging Differentiator

Mixture-of-Experts models like DeepSeek-V3 create a unique challenge: different experts may activate during inference than during training, causing memory and routing mismatches. Libraries with first-class MoE support (particularly verl, with its Expert Parallel backend) are pulling ahead.

Why This Matters Beyond RL

The async disaggregation pattern isn't just for reinforcement learning. On-policy distillation — where a student model generates sequences and a teacher model scores them — has exactly the same architecture. Swap "reward function" for "teacher forward pass" and every design decision in this survey applies directly.

As the Hugging Face team notes: "Everything in this survey applies equally to async distillation." The infrastructure being built for RL training will power the next generation of distillation pipelines too.

The Agentic Training Scale

The scale of modern agentic RL training is staggering. MiniMax's Forge framework, used to train MiniMax-M2.5, operates at:

- Context lengths up to 200K tokens

- Over 100,000 distinct agent scaffolds and environments

- Daily throughput of millions of samples

At this scale, any synchronous barrier between generation and training idles hundreds of GPUs. The straggler problem alone — a handful of slow rollouts blocking an entire batch — can waste more compute than many organizations use in total.

What's Next

The Hugging Face team is using these findings to build a new async trainer for TRL, one of the most widely used post-training libraries. Their design choices reflect the survey's lessons:

- Lightweight orchestration (no hard Ray dependency)

- Bounded queue with per-token model versioning (no double-buffering)

- NCCL weight sync with packed transfers (~20ms target)

- Partial rollout support for agentic workloads where sequences interact with tools and environments

The async RL training landscape is still young, but the architectural convergence is clear. The question isn't whether to disaggregate inference from training — it's how aggressively to decouple them and how to manage the staleness that comes with it.

Source: "Keep the Tokens Flowing: Lessons from 16 Open-Source RL Libraries" by Amine Dirhoussi, Quentin Gallouédec, Kashif Rasul, Lewis Tunstall, Ed Beeching, Albert Villanova, Nouamane Tazi, Leandro von Werra, and Sergio Paniego at Hugging Face.
